Audio Spatialization Research at CNMAT (2019)

From concerts and research in the Main Room of our facility at 1750 Arch Street in Berkeley, CA, to large-scale installations in concert halls at UC Berkeley and beyond, enabling composers to explore the spatial dimension of music remains central to CNMAT's research agenda.

Sound spatialization is a rich and complex topic. In live concert production, mimicking the directional propagation characteristics of an acoustic source presents challenges on several fronts. First, regardless of the spatialization technique used, a large number of loudspeakers is typically required. Even when enough loudspeakers can be sourced, a highly specialized technical infrastructure is needed to coordinate and condition the signal sent to each one. These resources are generally beyond the means of the average venue and require the attention of specialized production staff. Second, even under ideal conditions, with a large, well-tuned sound system deployed, rendering a unique signal for every loudspeaker in real time requires significant computational resources.
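To make the computational point concrete: even the simplest renderer, a static gain matrix that weights every source into every loudspeaker, performs one multiply-add per source for every output sample of every loudspeaker feed. This is an assumption chosen for illustration, not CNMAT's renderer; amplitude panning, ambisonic decoding, and wave field synthesis all add filtering or delays on top of it. A minimal pure-Python sketch, with hypothetical function names:

```python
def render_block(sources: list[list[float]], gains: list[list[float]]) -> list[list[float]]:
    """Mix one block of source audio into loudspeaker feeds with a static gain matrix.

    sources: n_sources signals, each n_frames samples long
    gains:   n_speakers rows of n_sources gains; gains[m][n] weights source n in speaker m
    returns: n_speakers feeds, each n_frames samples long
    """
    n_sources = len(sources)
    n_frames = len(sources[0]) if sources else 0
    feeds = []
    for row in gains:
        if len(row) != n_sources:
            raise ValueError("each gain row needs one entry per source")
        feed = [0.0] * n_frames
        # Every output sample of every feed costs n_sources multiply-adds.
        for gain, signal in zip(row, sources):
            for i, sample in enumerate(signal):
                feed[i] += gain * sample
        feeds.append(feed)
    return feeds


def multiply_adds_per_second(n_sources: int, n_speakers: int, sample_rate: int) -> int:
    """Arithmetic load of the gain-matrix stage alone (a lower bound for real renderers)."""
    return n_sources * n_speakers * sample_rate
```

With the hypothetical figures of 64 sources and 16 loudspeakers at 48 kHz, `multiply_adds_per_second(64, 16, 48_000)` gives 49,152,000 multiply-adds per second, and that floor rises as per-speaker filters, delays, and additional loudspeakers are added.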

In recent years, CNMAT has invested in infrastructure to address these concerns. First, and most significantly, we have outfitted our main performance facilities with permanently installed loudspeaker arrays. The Main Room at CNMAT features a unique 8.8-channel surround array developed in cooperation with Meyer Sound, which gives composers and researchers a convenient platform for experimentation and for developing works intended for other venues. Beyond our own facility, we have worked closely with the UC Berkeley Music Department to develop Hertz Hall as a venue for live spatialized performance. Several times per year we host concerts there with eight or more channels of surround audio.
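As an illustration of the kind of experiment a permanent horizontal array supports, the sketch below computes pairwise constant-power (sine/cosine law) panning gains for a source placed anywhere on a ring of loudspeakers. It assumes eight loudspeakers equally spaced at 45° intervals with speaker 0 at 0°; the actual geometry and channel assignment of the Main Room array are not described here, and `ring_pan_gains` is a hypothetical helper.

```python
import math


def ring_pan_gains(azimuth_deg: float, n_speakers: int = 8) -> list[float]:
    """Pairwise constant-power panning gains for speakers equally spaced on a circle.

    Assumes speaker k sits at azimuth k * 360 / n_speakers degrees.
    """
    spacing = 360.0 / n_speakers
    az = azimuth_deg % 360.0
    # The speaker at or just below the source azimuth, and its neighbour.
    lower = int(az // spacing) % n_speakers
    upper = (lower + 1) % n_speakers
    # Fractional position of the source between those two speakers, in [0, 1).
    frac = (az - lower * spacing) / spacing
    gains = [0.0] * n_speakers
    # The sine/cosine law keeps gains[lower]**2 + gains[upper]**2 == 1.
    gains[lower] = math.cos(frac * math.pi / 2)
    gains[upper] = math.sin(frac * math.pi / 2)
    return gains
```

A source at 22.5° lies halfway between speakers 0 and 1, so each receives a gain of about 0.707 and the summed power stays at 1; a source at 350° is shared between speakers 7 and 0.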

We have also developed computational infrastructure designed specifically for the demands of live spatial audio rendering. In 2017, we commissioned and built the CNMAT Spatial Audio Server, or “SPAT Machine”, a purpose-built computer that handles up to 300 channels of audio input/output over optical MADI connections. It is designed for real-time spatial processing of input signals in arbitrary performance contexts and sound system configurations.
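The text does not say how the SPAT Machine's 300 channels are divided among MADI ports. As rough arithmetic only, assuming the AES10 (MADI) figures of 64 channels per link at 48 kHz and 32 channels per link at 96 kHz, the number of optical links such a channel budget implies can be computed with a hypothetical helper:

```python
import math

# Audio channels carried by one MADI (AES10) link, keyed by sample rate in Hz.
# Assumed figures: 64 channels at 48 kHz, 32 channels at 96 kHz (double speed).
MADI_CHANNELS_PER_LINK = {48_000: 64, 96_000: 32}


def madi_links_needed(n_channels: int, sample_rate: int = 48_000) -> int:
    """Minimum number of MADI links needed to carry n_channels per direction."""
    per_link = MADI_CHANNELS_PER_LINK[sample_rate]
    return math.ceil(n_channels / per_link)
```

Under those assumptions, 300 channels at 48 kHz would need `madi_links_needed(300)`, that is 5 links per direction; at 96 kHz the same budget would need 10.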

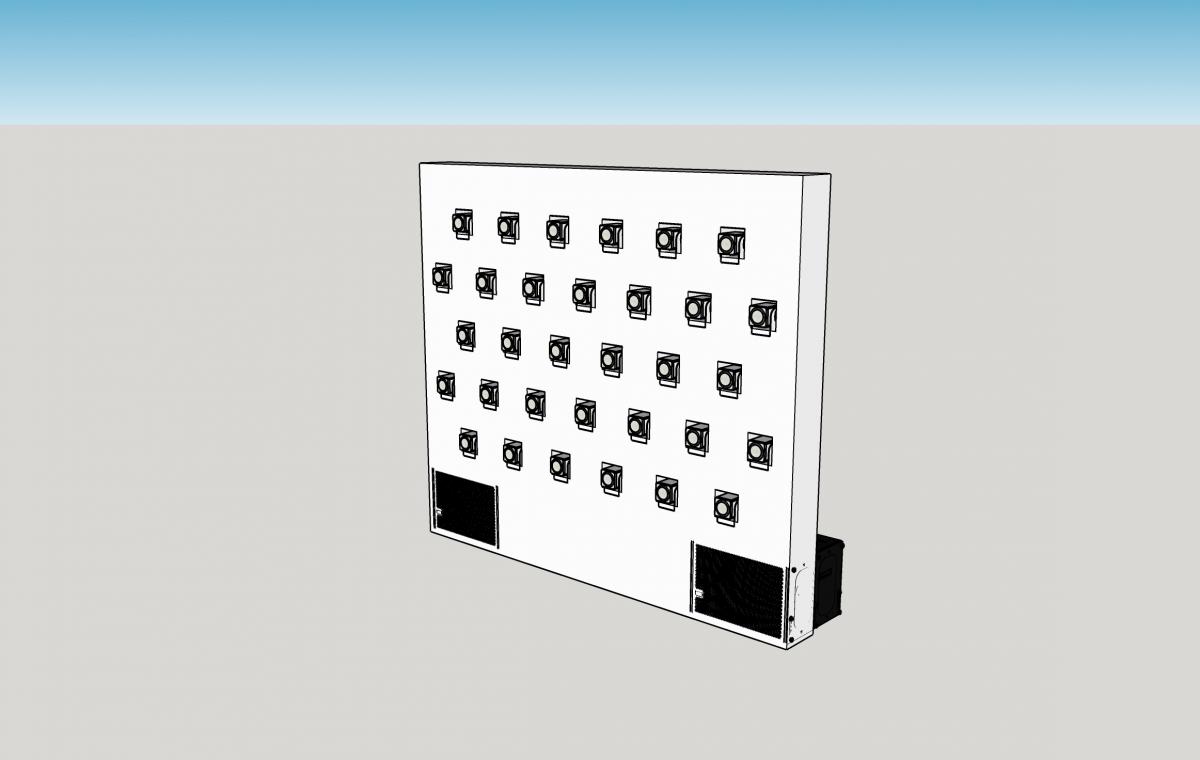

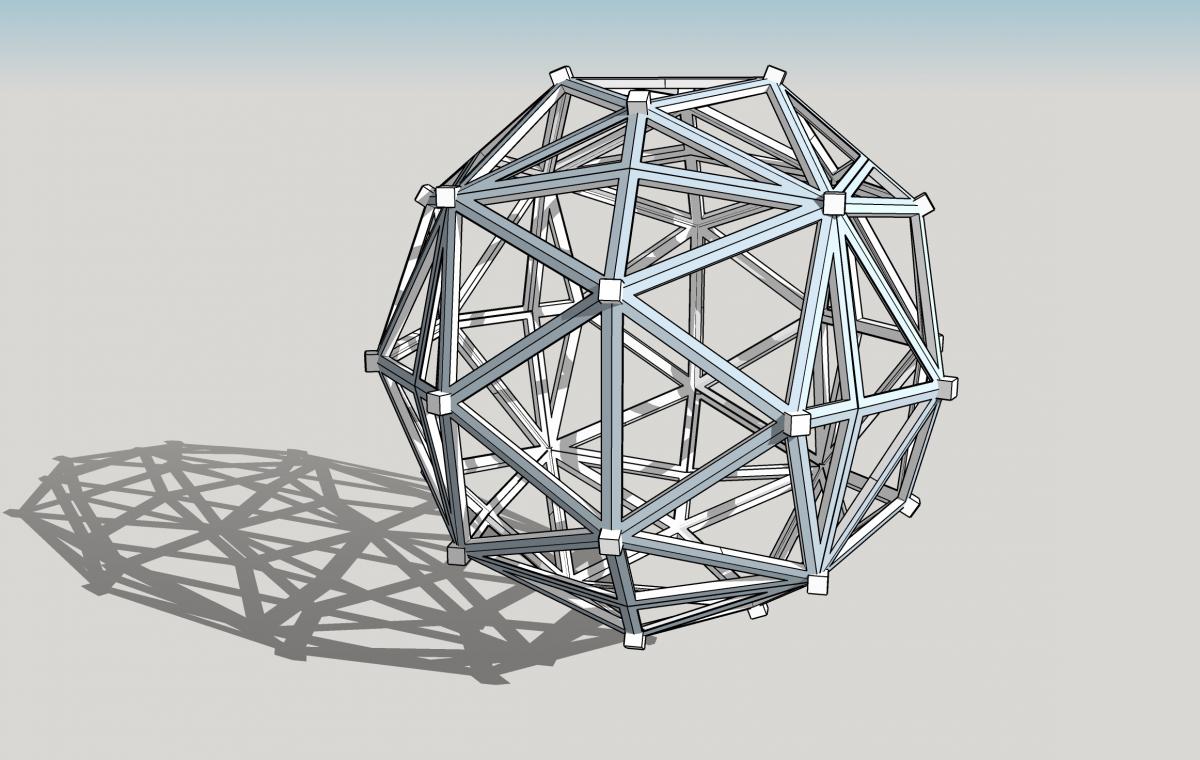

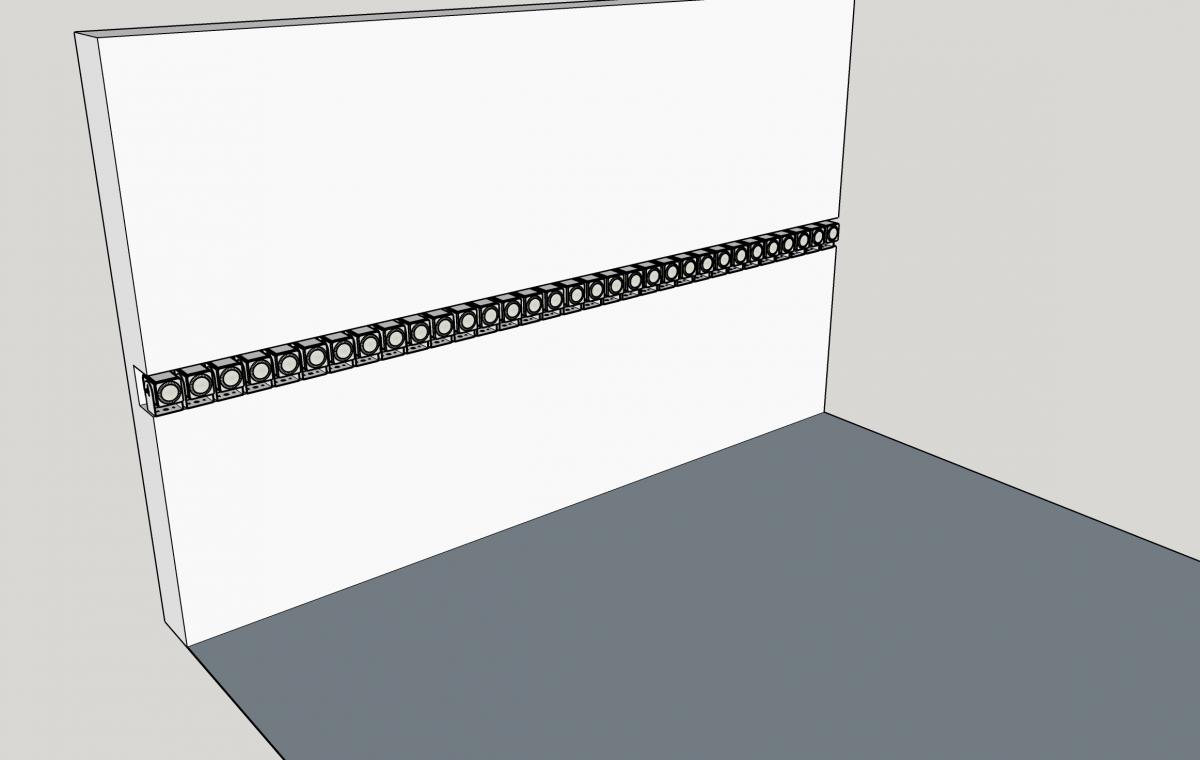

In 2018, CNMAT secured a grant from the UC Berkeley Student Technology Fee to build a Mobile Multi-Purpose Surround Array (MMPSA). It provides a mobile spatial audio platform for student concerts in spaces outside CNMAT and Hertz Hall, and it can be deployed together with the infrastructure described above. The system mirrors the capabilities of the CNMAT Main Room but is designed for quick deployment in remote locations, making it possible to create an immersive spatial audio venue nearly anywhere. It is also highly configurable and expandable, which allows experimentation with loudspeaker layouts for ambisonic and wave field arrays. Pictured below is the MMPSA deployed as part of a small wave field installation. We have also begun to design further layouts for the array, including cable-stayed structures that form immersive geodesic arrays for ambisonic installations, and rigid installations that serve as 1D and 2D wave field emitters.
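For the 1D wave field layouts mentioned in the paragraph above, the core idea is that each loudspeaker in a line replays the source with a delay and level set by its distance from a virtual source behind the array, so the combined wavefronts appear to radiate from that point. The sketch below is a simplified delay-and-attenuation approximation, not a full wave field synthesis driving function (which also applies a pre-equalization filter and a directional weighting). The array geometry, the 343 m/s speed of sound, and the name `linear_array_delays` are assumptions for illustration.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s, assumed room-temperature value


def linear_array_delays(source_xy: tuple[float, float], n_speakers: int,
                        spacing_m: float) -> tuple[list[float], list[float]]:
    """Per-speaker delays (s) and gains for a virtual point source behind a line array.

    Speakers lie on the x-axis at x = i * spacing_m, y = 0. The virtual source
    sits at source_xy with y < 0 (behind the array); listeners are at y > 0.
    """
    sx, sy = source_xy
    if sy >= 0:
        raise ValueError("virtual source must lie behind the array (y < 0)")
    dists = [math.hypot(i * spacing_m - sx, sy) for i in range(n_speakers)]
    nearest = min(dists)
    # Delay relative to the nearest speaker, which therefore fires at t = 0.
    delays = [(d - nearest) / SPEED_OF_SOUND for d in dists]
    # 1/sqrt(r) amplitude decay, normalised so the nearest speaker has gain 1.
    gains = [math.sqrt(nearest / d) for d in dists]
    return delays, gains
```

For a source 1 m behind the middle speaker of a three-speaker array with 1 m spacing, the middle speaker fires first at full gain and the two outer speakers fire together, slightly later and quieter.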

Taken as a whole, these investments have allowed us to make great strides in the concert production of spatialized sound, and we look forward to extending these systems with further rounds of investment. In the meantime, we have recognized that some of this infrastructure is also useful for complementary spatial audio problems in augmented and virtual reality (AR/VR). Since 2018 we have been researching emerging problems in binaural spatial audio for virtual contexts. We have consistently found that current commercial AR/VR applications give audio far less attention than their visual components. To address this, we have devised a computational strategy that enables binaural spatialization of dozens of audio sources within an AR/VR scene. Unlike the audio engines in Unity and Unreal Engine, which are designed to support only a few channels of audio, our approach splits audio rendering across two separate computer systems that together handle the synthesis and spatialization of the sounds in the auditory scene.
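The renderer itself is not detailed here. As a minimal sketch of why binaural spatialization of dozens of sources is costly, assume each source has already been assigned a left/right head-related impulse response (HRIR) pair for its direction relative to the listener's head; the two ear signals are then sums of per-source convolutions, so the work grows linearly with the number of sources. The helper names and toy HRIRs are hypothetical placeholders, not measured data; a real-time renderer would use FFT-based partitioned convolution and interpolate HRIRs as the head moves.

```python
def convolve(signal: list[float], impulse: list[float]) -> list[float]:
    """Direct-form linear convolution, O(len(signal) * len(impulse))."""
    if not signal or not impulse:
        return []
    out = [0.0] * (len(signal) + len(impulse) - 1)
    for i, x in enumerate(signal):
        for j, h in enumerate(impulse):
            out[i + j] += x * h
    return out


def binaural_mix(sources: list[list[float]],
                 hrir_pairs: list[tuple[list[float], list[float]]]
                 ) -> tuple[list[float], list[float]]:
    """Sum each source convolved with its (left, right) HRIR into two ear signals."""
    if len(sources) != len(hrir_pairs):
        raise ValueError("need exactly one HRIR pair per source")
    left: list[float] = []
    right: list[float] = []
    for signal, (h_left, h_right) in zip(sources, hrir_pairs):
        for ear, h in ((left, h_left), (right, h_right)):
            y = convolve(signal, h)
            # Grow the accumulator if this source's output is longer.
            ear.extend([0.0] * (len(y) - len(ear)))
            for k, v in enumerate(y):
                ear[k] += v
    return left, right
```

Each added source costs two more convolutions, one per ear, which is the load that motivates moving synthesis and spatialization off the machine running the game engine.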

Open Sound Control (OSC) coordinates these tasks across the platforms, and audio passes between the systems over optical MADI connections. The result is a system that can synthesize unique sounds for object interactions in an authored AR/VR scene and spatialize them realistically within the binaural sound field, accounting for the user's head movements, head-related transfer function (HRTF), and position within the scene. We have demonstrated the system with 64 individual audio streams, a limit imposed by the 64-channel capacity of an optical MADI connection.